In my first Pushing the Limits of Windows post, I discussed physical memory limits, including the limits imposed by licensing, implementation, and driver compatibility. Here’s the index of the entire Pushing the Limits series. While they can stand on their own, they assume that you read them in order.

A common symptom of low virtual memory is a message that sometimes appears when an administrator logs on, warning that some things may not be available while the virtual memory paging file is being increased. The error looks like this: “Your system is low on virtual memory. Windows is increasing the size of your virtual memory paging file. During this process, memory requests for some applications may be denied. For more information, see Help.”

This time I’m turning my attention to another fundamental resource, virtual memory. Virtual memory separates a program’s view of memory from the system’s physical memory, so an operating system decides when and if to store the program’s code and data in physical memory and when to store it in a file. The major advantage of virtual memory is that it allows more processes to execute concurrently than might otherwise fit in physical memory.

While virtual memory has limits that are related to physical memory limits, it also has limits that derive from different sources and that differ depending on the consumer. For example, there are virtual memory limits that apply to individual processes that run applications, to the operating system, and to the system as a whole. It's important to remember as you read this that virtual memory, as the name implies, has no direct connection with physical memory. Windows assigning the file cache a certain amount of virtual memory does not dictate how much file data it actually caches in physical memory; it can be any amount from none to more than the amount that's addressable via virtual memory.

Process Address Spaces

Each process has its own virtual memory, called an address space, into which it maps the code that it executes and the data that the code references and manipulates. A 32-bit process uses 32-bit virtual memory address pointers, which creates an absolute upper limit of 4GB (2^32) for the amount of virtual memory that a 32-bit process can address. However, so that the operating system can reference its own code and data and the code and data of the currently-executing process without changing address spaces, the operating system makes its virtual memory visible in the address space of every process. By default, 32-bit versions of Windows split the process address space evenly between the system and the active process, creating a limit of 2GB for each:

Applications might use Heap APIs, the .NET garbage collector, or the C runtime malloc library to allocate virtual memory, but under the hood all of these rely on the VirtualAlloc API. When an application runs out of address space then VirtualAlloc, and therefore the memory managers layered on top of it, return errors (represented by a NULL address). The Testlimit utility, which I wrote for the 4th Edition of Windows Internals to demonstrate various Windows limits, calls VirtualAlloc repeatedly until it gets an error when you specify the –r switch. Thus, when you run the 32-bit version of Testlimit on 32-bit Windows, it will consume the entire 2GB of its address space:

2010 MB isn’t quite 2GB, but Testlimit’s other code and data, including its executable and system DLLs, account for the difference. You can see the total amount of address space it’s consumed by looking at its Virtual Size in Process Explorer:

Some applications, like SQL Server and Active Directory, manage large data structures and perform better the more that they can load into their address space at the same time. Windows NT 4 SP3 therefore introduced a boot option, /3GB, that gives a process 3GB of its 4GB address space by reducing the size of the system address space to 1GB, and Windows XP and Windows Server 2003 introduced the /userva option that moves the split anywhere between 2GB and 3GB:

To take advantage of the address space above the 2GB line, however, a process must have the ‘large address space aware’ flag set in its executable image. Access to the additional virtual memory is opt-in because some applications have assumed that they’d be given at most 2GB of the address space. Since the high bit of a pointer referencing an address below 2GB is always zero, they would use the high bit in their pointers as a flag for their own data, clearing it of course before referencing the data. If they ran with a 3GB address space they would inadvertently truncate pointers that have values greater than 2GB, causing program errors including possible data corruption.
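To make the failure mode concrete, here is a minimal Python sketch that models 32-bit pointers as plain integers. The helper names (`tag`, `untag`) and the two sample addresses are hypothetical and chosen only for illustration; they are not taken from any real application.

```python
HIGH_BIT = 0x80000000   # bit 31 of a 32-bit pointer
MASK_32 = 0xFFFFFFFF    # keep results within 32 bits

def tag(pointer: int) -> int:
    """Set the high bit as an application-private flag."""
    return (pointer | HIGH_BIT) & MASK_32

def untag(pointer: int) -> int:
    """Clear the high bit before dereferencing, as such applications did."""
    return pointer & ~HIGH_BIT & MASK_32

below_2gb = 0x7FFE0000  # hypothetical address below the 2GB line
above_2gb = 0xB0000000  # hypothetical address a 3GB process could be given

# Below 2GB the round trip is lossless: the high bit was zero to begin with.
print(hex(untag(tag(below_2gb))))  # 0x7ffe0000

# Above 2GB the high bit is part of the address, so clearing it
# silently produces a different (wrong) pointer.
print(hex(untag(tag(above_2gb))))  # 0x30000000
```

The second result points into a completely different part of the address space, which is why access to the memory above 2GB has to be opt-in.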

All Microsoft server products and data intensive executables in Windows are marked with the large address space awareness flag, including Chkdsk.exe, Lsass.exe (which hosts Active Directory services on a domain controller), Smss.exe (the session manager), and Esentutl.exe (the Active Directory Jet database repair tool). You can see whether an image has the flag with the Dumpbin utility, which comes with Visual Studio:

Testlimit is also marked large-address aware, so if you run it with the –r switch when booted with the 3GB of user address space, you’ll see something like this:

Because the address space on 64-bit Windows is much larger than 4GB, something I’ll describe shortly, Windows can give 32-bit processes the maximum 4GB that they can address and use the rest for the operating system’s virtual memory. If you run Testlimit on 64-bit Windows, you’ll see it consume the entire 32-bit addressable address space:

64-bit processes use 64-bit pointers, so their theoretical maximum address space is 16 exabytes (2^64). However, Windows doesn’t divide the address space evenly between the active process and the system, but instead defines a region in the address space for the process and others for various system memory resources, like system page table entries (PTEs), the file cache, and paged and non-paged pools.

The size of the process address space is different on IA64 and x64 versions of Windows where the sizes were chosen by balancing what applications need against the memory costs of the overhead (page table pages and translation lookaside buffer - TLB - entries) needed to support the address space. On x64, that’s 8192GB (8TB) and on IA64 it’s 7168GB (7TB - the 1TB difference from x64 comes from the fact that the top level page directory on IA64 reserves slots for Wow64 mappings). On both IA64 and x64 versions of Windows, the size of the various resource address space regions is 128GB (e.g. non-paged pool is assigned 128GB of the address space), with the exception of the file cache, which is assigned 1TB. The address space of a 64-bit process therefore looks something like this:

The figure isn’t drawn to scale, because even 8TB, much less 128GB, would be a small sliver. Suffice it to say that like our universe, there’s a lot of emptiness in the address space of a 64-bit process.
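A quick bit of arithmetic shows just how empty it is. This is a small sketch using only the sizes quoted above; the variable names are mine.

```python
GB = 1024 ** 3
TB = 1024 ** 4
EB = 1024 ** 6

total_64bit = 2 ** 64     # theoretical 64-bit address space in bytes
x64_user = 8192 * GB      # process region on x64
ia64_user = 7168 * GB     # process region on IA64

print(total_64bit // EB)                  # 16 (exabytes)
print(x64_user // TB, ia64_user // TB)    # 8 7 (terabytes)

# How many 8TB process regions would fit in the full 64-bit space?
print(total_64bit // x64_user)            # 2097152
```

In other words, the 8TB process region on x64 is roughly one two-millionth of what 64-bit pointers could theoretically address.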

When you run the 64-bit version of Testlimit (Testlimit64) on 64-bit Windows with the –r switch, you’ll see it consume 8TB, which is the size of the part of the address space it can manage:

Committed Memory

Testlimit’s –r switch has it reserve virtual memory, but not actually commit it. Reserved virtual memory can’t actually store data or code, but applications sometimes use a reservation to create a large block of virtual memory and then commit it as needed to ensure that the committed memory is contiguous in the address space. When a process commits a region of virtual memory, the operating system guarantees that it can maintain all the data the process stores in the memory either in physical memory or on disk. That means that a process can run up against another limit: the commit limit.

As you’d expect from the description of the commit guarantee, the commit limit is the sum of physical memory and the sizes of the paging files. In reality, not quite all of physical memory counts toward the commit limit since the operating system reserves part of physical memory for its own use. The amount of committed virtual memory for all the active processes, called the current commit charge, cannot exceed the system commit limit. When the commit limit is reached, virtual allocations that commit memory fail. That means that even a standard 32-bit process may get virtual memory allocation failures before it hits the 2GB address space limit.
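The bookkeeping can be sketched in a few lines of Python. This is a simplified model, not how the memory manager is implemented: the `CommitTracker` class is hypothetical, it counts all of physical memory toward the limit (Windows counts slightly less), and it ignores paging file growth.

```python
class CommitTracker:
    """Toy model of the system-wide commit charge and commit limit."""

    def __init__(self, physical_mb, paging_files_mb):
        # Simplification: all physical memory counts toward the limit.
        self.limit_mb = physical_mb + sum(paging_files_mb)
        self.charge_mb = 0

    def commit(self, size_mb):
        """Return True if the allocation fits under the commit limit."""
        if self.charge_mb + size_mb > self.limit_mb:
            return False  # models a failed committing allocation
        self.charge_mb += size_mb
        return True

# A hypothetical system with 1GB of RAM and one 512MB paging file.
system = CommitTracker(physical_mb=1024, paging_files_mb=[512])

successful = 0
while system.commit(100):   # a process committing 100MB at a time
    successful += 1

print(system.limit_mb)      # 1536
print(successful * 100)     # 1500
```

On this hypothetical system the process is refused after committing 1500MB, well short of its 2GB address space limit.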

The current commit charge and commit limit are tracked by Process Explorer in its System Information window in the Commit Charge section and in the Commit History bar chart and graph:

Task Manager prior to Vista and Windows Server 2008 shows the current commit charge and limit similarly, but calls the current commit charge 'PF Usage' in its graph:

On Vista and Server 2008, Task Manager doesn't show the commit charge graph and labels the current commit charge and limit values with 'Page File' (despite the fact that they will be non-zero values even if you have no paging file):

You can stress the commit limit by running Testlimit with the -m switch, which directs it to allocate committed memory. The 32-bit version of Testlimit may or may not hit its address space limit before hitting the commit limit, depending on the size of physical memory, the size of the paging files and the current commit charge when you run it. If you're running 32-bit Windows and want to see how the system behaves when you hit the commit limit, simply run multiple instances of Testlimit until one hits the commit limit before exhausting its address space.

Note that, by default, the paging file is configured to grow, which means that the commit limit will grow when the commit charge nears it. And even when the paging file hits its maximum size, Windows is holding back some memory and its internal tuning, as well as that of applications that cache data, might free up more. Testlimit anticipates this and when it reaches the commit limit, it sleeps for a few seconds and then tries to allocate more memory, repeating this indefinitely until you terminate it.

If you run the 64-bit version of Testlimit, it will almost certainly hit the commit limit before exhausting its address space, unless physical memory and the paging files sum to more than 8TB, which as described previously is the size of the 64-bit application-accessible address space. Here's the partial output of the 64-bit Testlimit running on my 8GB system (I specified an allocation size of 100MB to make it leak more quickly):

And here's the commit history graph with steps when Testlimit paused to allow the paging file to grow:

Virtual Memory Too Low

When system virtual memory runs low, applications may fail and you might get strange error messages when attempting routine operations. In most cases, though, Windows will be able to present you with the low-memory resolution dialog, like it did for me when I ran this test:

After you exit Testlimit, the commit limit will likely drop again when the memory manager truncates the tail of the paging file that it created to accommodate Testlimit's extreme commit requests. Here, Process Explorer shows that the current limit is well below the peak that was achieved when Testlimit was running:

Process Committed Memory

Because the commit limit is a global resource whose consumption can lead to poor performance, application failures and even system failure, a natural question is 'how much are processes contributing to the commit charge?' To answer that question accurately, you need to understand the different types of virtual memory that an application can allocate.

Not all the virtual memory that a process allocates counts toward the commit limit. As you've seen, reserved virtual memory doesn't. Virtual memory that represents a file on disk, called a file mapping view, also doesn't count toward the limit unless the application asks for copy-on-write semantics, because Windows can discard any data associated with the view from physical memory and then retrieve it from the file. The virtual memory in Testlimit's address space where its executable and system DLL images are mapped therefore doesn't count toward the commit limit. There are two types of process virtual memory that do count toward the commit limit: private and pagefile-backed.

Private virtual memory is the kind that underlies the garbage collector heap, native heap and language allocators. It's called private because by definition it can't be shared between processes. For that reason, it's easy to attribute to a process and Windows tracks its usage with the Private Bytes performance counter. Process Explorer displays a process's private bytes usage in the Private Bytes column and in the Virtual Memory section of the Performance page of the process properties dialog, and shows it in graphical form on the Performance Graph page of the same dialog. Here's what Testlimit64 looked like when it hit the commit limit:

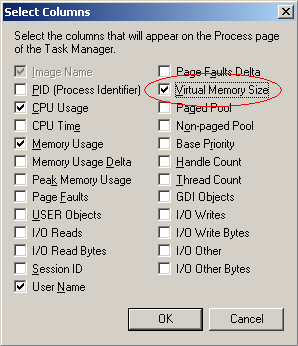

Pagefile-backed virtual memory is harder to attribute, because it can be shared between processes. In fact, there's no process-specific counter you can look at to see how much a process has allocated or is referencing. When you run Testlimit with the -s switch, it allocates pagefile-backed virtual memory until it hits the commit limit, but even after consuming over 29GB of commit, the virtual memory statistics for the process don't provide any indication that it's the one responsible:

For that reason, I added the -l switch to Handle a while ago. A process must open a pagefile-backed virtual memory object, called a section, for it to create a mapping of pagefile-backed virtual memory in its address space. While Windows preserves existing virtual memory even if an application closes the handle to the section that it was made from, most applications keep the handle open. The -l switch prints the size of the allocation for pagefile-backed sections that processes have open. Here's partial output for the handles open by Testlimit after it has run with the -s switch:

You can see that Testlimit is allocating pagefile-backed memory in 1MB blocks and if you summed the size of all the sections it had opened, you'd see that it was at least one of the processes contributing large amounts to the commit charge.

How Big Should I Make the Paging File?

Perhaps one of the most commonly asked questions related to virtual memory is, how big should I make the paging file? There’s no end of ridiculous advice out on the web and in the newsstand magazines that cover Windows, and even Microsoft has published misleading recommendations. Almost all the suggestions are based on multiplying RAM size by some factor, with common values being 1.2, 1.5 and 2. Now that you understand the role that the paging file plays in defining a system’s commit limit and how processes contribute to the commit charge, you’re well positioned to see how useless such formulas truly are.

Since the commit limit sets an upper bound on how much private and pagefile-backed virtual memory can be allocated concurrently by running processes, the only way to reasonably size the paging file is to know the maximum total commit charge for the programs you like to have running at the same time. If the commit limit is smaller than that number, your programs won’t be able to allocate the virtual memory they want and will fail to run properly.

So how do you know how much commit charge your workloads require? You might have noticed in the screenshots that Windows tracks that number and Process Explorer shows it: Peak Commit Charge. To optimally size your paging file you should start all the applications you run at the same time, load typical data sets, and then note the commit charge peak (or look at this value after a period of time where you know maximum load was attained). Set the paging file minimum to be that value minus the amount of RAM in your system (if the value is negative, pick a minimum size to permit the kind of crash dump you are configured for). If you want to have some breathing room for potentially large commit demands, set the maximum to double that number.
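The sizing rule above reduces to a couple of lines of arithmetic. Here is a minimal sketch; the function name, the megabyte units, and the `crash_dump_min_mb` parameter are my own, and I'm assuming "double that number" refers to the minimum you just computed.

```python
def size_paging_file(peak_commit_mb, ram_mb, crash_dump_min_mb):
    """Return (minimum_mb, maximum_mb) for the paging file.

    crash_dump_min_mb is whatever size your configured crash dump
    type needs; it is used when peak commit fits entirely in RAM.
    """
    minimum = peak_commit_mb - ram_mb
    if minimum <= 0:
        # Peak commit fits in RAM, so size for the crash dump instead.
        minimum = crash_dump_min_mb
    maximum = 2 * minimum   # breathing room for large commit demands
    return minimum, maximum

# Hypothetical workload: 12,000MB peak commit on an 8GB (8192MB) machine.
print(size_paging_file(12000, 8192, crash_dump_min_mb=800))  # (3808, 7616)

# Hypothetical light workload whose peak commit fits in RAM.
print(size_paging_file(4000, 8192, crash_dump_min_mb=800))   # (800, 1600)
```

Notice that the result depends on the measured peak commit charge, not on a fixed multiple of RAM.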

Some feel having no paging file results in better performance, but in general, having a paging file means Windows can write pages on the modified list (which represent pages that aren’t being accessed actively but have not been saved to disk) out to the paging file, thus making that memory available for more useful purposes (processes or file cache). So while there may be some workloads that perform better with no paging file, in general having one will mean more usable memory being available to the system (never mind that Windows won’t be able to write kernel crash dumps without a paging file sized large enough to hold them).

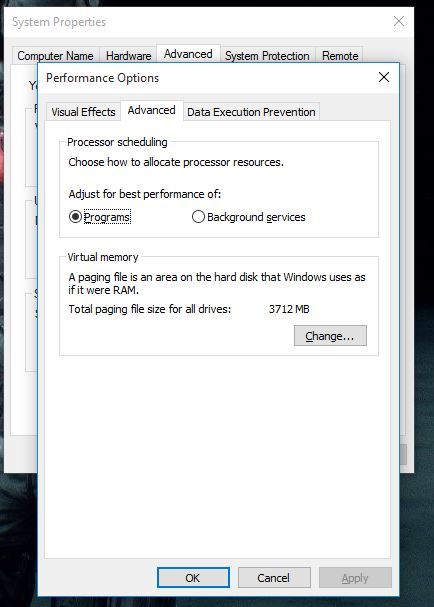

Paging file configuration is in the System properties, which you can get to by typing “sysdm.cpl” into the Run dialog, clicking on the Advanced tab, clicking on the Performance Options button, clicking on the Advanced tab (this is really advanced), and then clicking on the Change button:

You’ll notice that the default configuration is for Windows to automatically manage the page file size. When that option is set on Windows XP and Server 2003, Windows creates a single paging file whose minimum size is 1.5 times RAM if RAM is less than 1GB, and RAM if it's greater than 1GB, and whose maximum size is three times RAM. On Windows Vista and Server 2008, the minimum is intended to be large enough to hold a kernel-memory crash dump and is RAM plus 300MB or 1GB, whichever is larger. The maximum is either three times the size of RAM or 4GB, whichever is larger. That explains why the peak commit on my 8GB 64-bit system that’s visible in one of the screenshots is 32GB. I guess whoever wrote that code got their guidance from one of those magazines I mentioned!
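Those system-managed defaults are easy to express as code. This sketch restates only the rules described above, in megabytes; the function names are mine, and treating a machine with exactly 1GB of RAM like the "greater than 1GB" case is an assumption, since the rule as stated doesn't cover it.

```python
def auto_pagefile_xp(ram_mb):
    """System-managed (min, max) in MB on Windows XP / Server 2003."""
    # Assumption: exactly 1GB is treated like the "greater than 1GB" case.
    minimum = ram_mb * 3 // 2 if ram_mb < 1024 else ram_mb
    maximum = 3 * ram_mb
    return minimum, maximum

def auto_pagefile_vista(ram_mb):
    """System-managed (min, max) in MB on Windows Vista / Server 2008."""
    minimum = max(ram_mb + 300, 1024)   # RAM + 300MB or 1GB
    maximum = max(3 * ram_mb, 4096)     # 3 x RAM or 4GB
    return minimum, maximum

ram_mb = 8192                           # the 8GB system from the text
minimum, maximum = auto_pagefile_vista(ram_mb)
print(minimum, maximum)                 # 8492 24576

# Commit limit once the paging file has grown to its maximum, in GB
# (counting all of RAM, which slightly overstates the real limit).
print((ram_mb + maximum) // 1024)       # 32
```

The last line reproduces the 32GB figure: 8GB of RAM plus a paging file that has grown to three times RAM.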

A couple of final limits related to virtual memory are the maximum size and number of paging files supported by Windows. 32-bit Windows has a maximum paging file size of 16TB (4GB if you for some reason run in non-PAE mode) and 64-bit Windows can have paging files that are up to 16TB in size on x64 and 32TB on IA64. Windows 8 ARM’s maximum paging file size is 4GB. For all versions, Windows supports up to 16 paging files, where each must be on a separate volume.

Does anyone other than me think that the concept of virtual memory (using hard disk space to make up for what the system lacks in RAM) is archaic? Ten years ago, when memory was $100 per megabyte, virtual memory was a true necessity. Today though, you can walk into any electronics store in America and get a PC with over a gigabyte of RAM. Physical memory is no longer in short supply, and yet the Windows operating system still uses virtual memory.

Technically speaking, I guess virtual memory isn't a true requirement since you can disable it. However, Windows systems tend not to run very well without the aid of virtual memory. In fact, if you configure the machine's virtual memory incorrectly, you can encounter some serious stability problems. In this article, I will talk about how Windows uses virtual memory and how you can go about configuring Windows Server 2003 to make the best use of your machine's physical and virtual memory.

Virtual memory was invented at a time when physical memory was very expensive. The idea was to use hard disk space to supplement the amount of memory that's available to the system. Although this process works, using virtual memory is extremely inefficient for several reasons.

For starters, Windows cannot process data that's residing in virtual memory. If Windows needs to do something with data that's in virtual memory, it must move the page containing the needed data from virtual memory into physical memory. The problem is that the physical memory may already be full. Therefore, Windows has to use a technique called paging. Paging is the process of locating a page of data in physical memory that is not currently being used and transferring it to virtual memory to make room for the needed page to be moved from virtual memory to physical memory.

Paging really slows down a system. Windows must use CPU cycles and even a portion of RAM just to manage the paging process. Furthermore, hard disk access times are measured in milliseconds, as opposed to RAM access times which are measured in nanoseconds. To put it simply, the hard disk is a whole lot slower than RAM, and the system wastes a lot of resources and takes a lot of time to move pages of data back and forth between memory and virtual memory.

If a system becomes too low on RAM then thrashing can occur. Thrashing is a term for nearly constant paging. You have probably seen at least one situation in which a system's hard disk was running constantly with no visible results and a very sluggish response time. Chances are that this system was thrashing.

As much as virtual memory usage tends to slow down your system though, Windows is designed to use it. Windows uses a file named pagefile.sys for virtual memory. By default, this file (and therefore the system's virtual memory) is set to 1.5 times the size of the system's physical memory. For example, if a server has a gigabyte of RAM, then the initial pagefile size will be one and a half gigabytes.

Notice in that paragraph that I said that the initial size of the page file will be a gig and a half. If the system has insufficient memory, or if your server is running demanding or leaky applications, then Windows may expand the size of the page file to prevent the system from running out of memory. This is where things get tricky. There is a very fine balance between not giving Windows enough space for paging, and giving the operating system too big of a page file.

As you read the remainder of this article, you may find yourself wanting to make adjustments to the Windows pagefile. Therefore, I want to show you up front how to change the pagefile size. For the purposes of this article, I am assuming that you are running the 32-bit version of Windows Server 2003.

To set the size of the Windows page file, select the System option from the Windows Control Panel. When the System Properties sheet appears, select the Advanced tab. At this point, locate the tab's Performance section and click the Settings button. This will cause Windows to open the Performance Options properties sheet. Select the properties sheet's Advanced tab and click the Change button. You will now see the dialog box shown in Figure A.

If you look at the figure, you will notice that all of my system's drives are listed, but that the page file exists on the C: volume. If you are really interested in boosting your system's performance, then C: is usually not the best place for the page file. You want to put your page file in the place where Windows will be able to read and write data with the greatest throughput. There are a few things to consider when choosing a location. For example, you should consider the speed of the hard disk, but also how heavily that disk is used. Having a fast drive does you no good if it is already in constant use. Keep in mind that there is a big difference between hard drives and volumes on a hard drive. It will do you little good to put a page file on a seldom used volume on a high speed drive if the drive contains another volume that's heavily used.

Windows will actually allow you to get creative with virtual memory usage. You could for example, place three small page files on three different volumes rather than having one large pagefile. Windows generally tends to perform better if you use a single pagefile though. I have also known people to span a pagefile across a stripe set for better performance. This will work, but you need to make sure that the stripe set is accessible during the boot phase.

Just below the listing of the system's volumes is an area for you to set the minimum and maximum page file sizes. The minimum size should be 1.5 times the size of the physical memory. The maximum size can usually be set to about double the physical RAM, but demanding or leaky applications can cause you to need a larger pagefile. Don't forget to adjust the minimum page file size if you add memory to your server.

Windows includes a tool called the Performance Monitor that you can use to determine how well various parts of the system are performing. There are lots of ways that you can use the Performance Monitor to determine how well the currently allocated virtual memory is meeting the system's needs. Performance Monitor offers hundreds if not thousands of different performance counters, and many of these can be used in some way to gauge memory and virtual memory performance. However, I am a big believer in keeping things simple when you can. It is possible to determine how well the currently allocated virtual memory is meeting the system's needs by watching just a few different Performance Monitor counters.

I recommend beginning by opening the Performance Monitor (it's on the Administrative Tools menu) and using the X icon to remove the default counters. After doing so, click the + icon to access the Add Counters dialog box, shown in Figure B.

The Add Counters dialog box is used to select the Performance Monitor counters that you want to watch. To select a counter, start by selecting the appropriate Performance Object. The performance object is basically just a category of counters. Once you select the correct performance object, select the desired counter and click Add. Once you have added the necessary counters to the Performance Monitor, click Close.

The first two counters that you should monitor are the Available Bytes counter (in the Memory performance object) and the % Usage counter (in the Paging File performance object). Once you add these counters, the Performance Monitor will look something like what you see in Figure C.

The Available Bytes counter tells you how much RAM the system presently has to work with. The number that the Performance Monitor gives you is in bytes. Therefore, you will have to divide the number by 1024 to get the number of available kilobytes, and divide by 1024 again to get the number of available megabytes. Your system should never, under any circumstances, have less than four megabytes of available memory. Four megabytes or less indicates a serious problem and you need to add memory to the server or free up some memory by shutting down some services.
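The conversion and the four-megabyte check can be sketched as follows. The function names are mine, and the sample readings are made-up values rather than real Performance Monitor output.

```python
def available_megabytes(available_bytes):
    """Convert an Available Bytes reading to megabytes."""
    kilobytes = available_bytes / 1024
    return kilobytes / 1024

def memory_is_critically_low(available_bytes, threshold_mb=4):
    """True when available memory is at or below the 4MB floor."""
    return available_megabytes(available_bytes) <= threshold_mb

print(available_megabytes(268435456))        # 256.0
print(memory_is_critically_low(268435456))   # False
print(memory_is_critically_low(3145728))     # True (only 3MB available)
```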

The % usage counter indicates the percentage of your pagefile that is currently being used. Around 40% to 50% is usually a good number. If eighty percent or more of the pagefile is in use then you probably need to add more memory to the server and / or increase the size of the page file. On the flip side, if only about 20% or less of the page file is in use then you are probably wasting system resources with an unnecessarily large page file.
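Those rules of thumb can be written down as a small classifier. The function name and the wording of the returned advice are mine; the 20% and 80% cut-offs are the ones given above.

```python
def assess_pagefile_usage(percent_used):
    """Classify a Paging File % Usage reading using the rules of thumb above."""
    if percent_used >= 80:
        return "add memory and/or increase the page file"
    if percent_used <= 20:
        return "page file is probably larger than necessary"
    return "page file size looks reasonable"

for reading in (15, 45, 85):    # made-up sample readings
    print(reading, assess_pagefile_usage(reading))
```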

If the server that you are working with happens to be running Microsoft Exchange Server 2003, then there are a few other counters that I also recommend watching. The reason for this is because Exchange Server is a particularly demanding application and is notorious for fragmenting the pagefile. In an Exchange environment, it doesn't matter how much space within the pagefile is free if the pagefile is fragmented and does not have large enough blocks of free space to accommodate the necessary data. Exchange Server 2003 is less prone to pagefile fragmentation than Exchange 2000 was, but these counters are still worth keeping an eye on.

The counters that you will want to focus on are all found in the MSExchangeIS performance object. The counters themselves are VM Largest Block Size, VM Total 16 MB Free Blocks, VM Total Large Free Block Bytes.

The VM Largest Block Size counter indicates the size of the largest free block of virtual memory. This value should never fall below 32 MB. The VM Total 16 MB Free Blocks counter tells you how many blocks of space within the page file are at least 16 MB in size. This value should never fall below three. The VM Total Large Free Block Bytes counter provides you with the sum of the available bytes found in free blocks of space that are 16 MB or larger in size. This value should never fall below 50 MB. If any of these counters fall below their threshold values then you should add some memory to the server and increase the size of the pagefile.
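If you log these counters, checking them against the three minimums is straightforward. This is a sketch only: the dictionary of sample readings is invented, and reading live counter values from Performance Monitor is outside its scope.

```python
MB = 1024 * 1024

# Minimum healthy values for the MSExchangeIS counters described above.
MINIMUMS = {
    "VM Largest Block Size": 32 * MB,
    "VM Total 16 MB Free Blocks": 3,
    "VM Total Large Free Block Bytes": 50 * MB,
}

def counters_below_minimum(readings):
    """Return the names of counters that have dropped below their minimum."""
    return [name for name, minimum in MINIMUMS.items()
            if readings[name] < minimum]

# Made-up readings: the largest block is fine, but few large blocks remain.
sample = {
    "VM Largest Block Size": 64 * MB,
    "VM Total 16 MB Free Blocks": 2,
    "VM Total Large Free Block Bytes": 40 * MB,
}

print(counters_below_minimum(sample))
# ['VM Total 16 MB Free Blocks', 'VM Total Large Free Block Bytes']
```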

So far I have talked mostly about the way that Windows uses virtual memory. However, the way that Windows uses virtual memory is a direct reflection of the way that Windows is using physical memory. By default, Windows uses something called the 2 GB memory model. As you probably know, 32-bit operating systems can address up to 4 GB of RAM. In Windows, the 4 GB address space is split evenly into two 2 GB address spaces. 2 GB of address space is used by the Windows operating system and 2 GB is used for user mode processes (applications).

You might have seen some Windows Server deployments in which there was a /3GB switch used in the server's BOOT.INI file. What the /3GB switch does is to allocate 1 GB of address space to the Windows operating system and 3 GB of address space to user mode processes. This allows Windows to better accommodate demanding applications such as Exchange Server.

Before I go on, I want to point out that the /3GB switch should be used sparingly. After all, Microsoft implemented the 2 GB memory model for a reason. There are consequences to depriving Windows of a gig of address space. Windows relies on a mechanism called Page Table Entries (PTEs) for allocating memory to the operating system and to the applications running on it. If you use the /3GB switch, Windows forfeits most of its PTE space. This isn't usually a problem if you are running a single, high demand application (such as an Exchange Server that is hosting 50 or more mailboxes). However, if you are running multiple applications or if your applications are not extremely demanding then you should stick to using the 2 GB memory model. Otherwise, there is a good chance that you could run the system out of PTEs. When available PTEs start getting low, Windows starts becoming unstable. Microsoft recommends that you never use the /3GB switch on a domain controller or on a Small Business Server.

In case you are wondering, there is a way to find out how many PTEs your system has available. You can use the Performance Monitor's Free System Page Table Entries counter (found in the Memory performance object). The PTEs should never drop below 7,000. As the number of available PTEs approaches 7,000 the system becomes less stable.

So what happens if you need the /3GB switch, but you are running low on PTEs? Well, there is a trick that you can use to allocate more PTEs to the system, but this technique is not supported by Microsoft. To increase the number of PTEs, you can add the /USERVA=3030 switch to the BOOT.INI file. This will allocate an additional 42 MB of space to the page table entries.
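The 42 MB figure is simply the difference between the full 3 GB of user address space and the value passed to the switch. A quick sketch, with function and parameter names of my own choosing:

```python
FULL_3GB_MB = 3 * 1024   # user address space granted by /3GB alone
TOTAL_4GB_MB = 4 * 1024  # total 32-bit address space

def space_returned_to_system_mb(userva_mb):
    """Address space handed back to the system relative to plain /3GB."""
    return FULL_3GB_MB - userva_mb

def system_address_space_mb(userva_mb):
    """Total address space left for the operating system."""
    return TOTAL_4GB_MB - userva_mb

print(space_returned_to_system_mb(3030))  # 42
print(system_address_space_mb(3030))      # 1066
```

A lower USERVA value returns more address space to the system, at the cost of user address space.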

You can experiment with the number assigned to the USERVA switch. The lower the number, the more space that gets allocated to the page table entries. There are five fundamental rules that you must abide by when using the USERVA switch:
